Master the role of AI in fraud detection for finance pros

Traditional fraud detection methods leave finance professionals chasing ghosts. Rule-based systems generate overwhelming false positives while sophisticated fraudsters slip through undetected. The average financial institution reviews thousands of alerts daily, yet less than 1% represent actual fraud. This inefficiency drains resources and erodes trust. Artificial intelligence transforms this landscape by learning fraud patterns dynamically, adapting to emerging threats in real time, and dramatically improving detection accuracy. This article explores how AI technologies, layered risk frameworks, and hybrid approaches empower fraud analysts to detect threats faster, reduce false alarms, and protect assets more effectively.

Table of Contents

- Key takeaways

- Understanding AI technologies for fraud detection

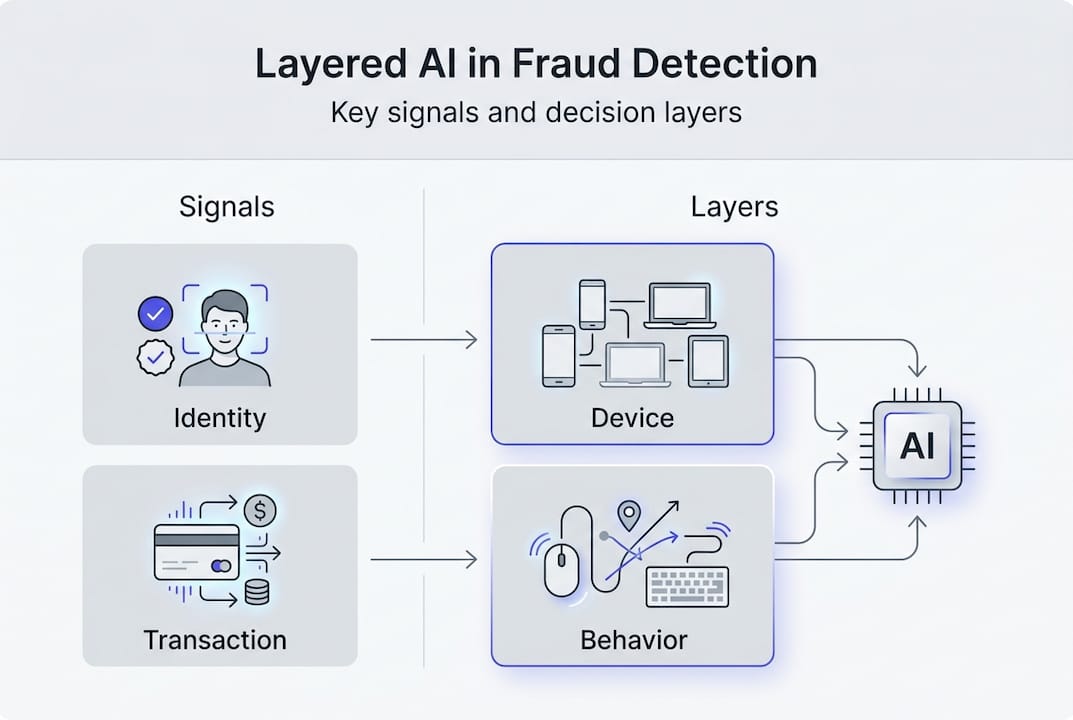

- Layered risk scoring frameworks: combining signals for precision

- Navigating challenges and advancing hybrid approaches

- Practical guidance for implementing AI-driven fraud detection

- Discover advanced AI-powered solutions with BankStatementFlow

- FAQ about the role of AI in fraud detection

Key takeaways

| Point | Details |
|---|---|
| Hybrid AI models | Hybrid AI integrates supervised, unsupervised, graph, and NLP components to detect both known and emerging fraud patterns. |
| Layered risk scoring | Layered frameworks aggregate signals from multiple dimensions with weighted scores to increase detection precision. |
| Rules plus AI | Hybrid approaches that pair AI with rules reduce false positives while maintaining compliance. |
| Explainability and oversight | Explainability tools and human-in-the-loop oversight help analysts trust AI decisions. |
| Data quality challenges | Class imbalance, data quality issues, and adversarial tactics continue to challenge detection models. |

Understanding AI technologies for fraud detection

AI fraud detection employs supervised machine learning algorithms like Random Forest and XGBoost trained on labeled historical fraud data. These models learn patterns distinguishing legitimate from fraudulent transactions by analyzing features such as transaction amounts, merchant categories, geographic locations, and user behavior sequences. When new transactions arrive, supervised models score them based on similarity to known fraud patterns.

Unsupervised learning complements this by identifying anomalies without prior fraud labels. Clustering algorithms group similar transactions, flagging outliers that deviate significantly from normal behavior. Isolation forests detect rare events by measuring how easily data points separate from the majority. These techniques catch novel fraud schemes that supervised models miss because they lack historical examples.
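
To make label-free outlier flagging concrete, here is a minimal sketch. It uses a median/MAD "modified z-score", a deliberately simpler stand-in for the clustering and isolation-forest methods described above, not an implementation of them. The function name, the 3.5 cutoff, and the sample amounts are illustrative assumptions.

```python
from statistics import median


def robust_outliers(amounts, cutoff=3.5):
    """Return indices of amounts whose modified z-score exceeds cutoff.

    Uses the median and the median absolute deviation (MAD), so a single
    extreme value cannot distort the baseline it is compared against.
    No fraud labels are needed.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        return []  # no spread in the data: nothing can be ranked as unusual
    # 0.6745 rescales MAD so the score is comparable to a standard z-score
    return [i for i, a in enumerate(amounts)
            if 0.6745 * abs(a - med) / mad > cutoff]


# Hypothetical card history: seven everyday purchases and one large outlier.
history = [42.0, 38.5, 51.0, 45.2, 40.0, 47.9, 39.9, 2500.0]
flagged = robust_outliers(history)  # only the last transaction is flagged
```

A production system would score many features at once rather than a single amount column, which is where isolation forests and clustering earn their place.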

Hybrid models push detection further by incorporating graph neural networks and natural language processing. Graph AI maps relationships between accounts, devices, and merchants to uncover fraud rings and coordinated attacks. A single fraudster controlling multiple accounts creates network patterns invisible to transaction-level analysis. NLP analyzes text in transaction descriptions, customer communications, and social media to detect phishing attempts or identity theft signals.
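
The relationship-mapping idea can be illustrated without a graph neural network. The sketch below is a much simpler technique, union-find over shared devices, that groups accounts connected through any common device. The account and device IDs, the function name, and the "three or more accounts" cutoff are hypothetical.

```python
from collections import defaultdict


def linked_account_groups(logins):
    """Group accounts that are connected through any shared device.

    logins: iterable of (account_id, device_id) pairs.
    Returns a list of sorted account-ID lists, largest group first.
    """
    parent = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    def union(a, b):
        parent[find(a)] = find(b)

    # Accounts and devices live in one graph; tags keep their IDs distinct.
    for account, device in logins:
        union(("acct", account), ("dev", device))

    groups = defaultdict(set)
    for node in list(parent):
        if node[0] == "acct":
            groups[find(node)].add(node[1])
    return sorted((sorted(g) for g in groups.values()),
                  key=lambda g: (-len(g), g))


# A1 and A2 share device d1; A2 and A3 share d2; B1 is unrelated.
logins = [("A1", "d1"), ("A2", "d1"), ("A2", "d2"), ("A3", "d2"), ("B1", "d9")]
rings = [g for g in linked_account_groups(logins) if len(g) >= 3]
```

No single transaction from A1 or A3 reveals that they are linked; the connection only appears once the shared devices are followed through A2.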

Modern fraud detection systems integrate multiple AI components working in concert:

- Predictive analytics forecasting fraud probability based on historical patterns and current conditions

- Anomaly detection engines monitoring deviations from established behavioral baselines

- Graph databases mapping entity relationships to expose hidden connections

- Deep learning models processing unstructured data like images and documents

- Real-time scoring engines evaluating transactions within milliseconds

These technologies work together because fraud evolves constantly. Fraudsters adapt their tactics once detection systems catch their schemes. Static rules become obsolete quickly, but AI models can be retrained on new data to stay current. Pairing practical AI applications in banking with continuous learning creates adaptive defenses, and financial institutions that apply machine learning across their fintech operations gain significant advantages in threat detection speed and accuracy.

Pro Tip: Start with supervised models for known fraud types, then layer unsupervised detection to catch emerging threats your labeled data does not cover.

Layered risk scoring frameworks: combining signals for precision

Effective fraud detection requires more than isolated AI models. Layered frameworks evaluate 47 fraud signals across five distinct dimensions, assigning weighted scores that aggregate into overall risk assessments. This approach mirrors how experienced analysts think, examining multiple evidence types before reaching conclusions.

The five-layer model structures evaluation systematically:

| Layer | Example Signals | Typical Weight |
|---|---|---|
| Identity | Email verification, phone validation, document authenticity | 20% |
| Access | Device fingerprint, IP reputation, login velocity | 15% |
| Behavior | Transaction patterns, session duration, navigation flow | 25% |
| Transaction | Amount anomalies, merchant risk, cross-border flags | 30% |
| Network | Linked accounts, shared devices, graph connections | 10% |

Each signal contributes proportionally based on its fraud correlation strength. Transaction anomalies carry heavy weight because unusual amounts or merchant categories strongly indicate fraud. Behavior patterns matter significantly since fraudsters rush through interfaces differently than legitimate users. Identity verification provides foundational assurance but alone proves insufficient.

Scoring thresholds trigger specific actions based on accumulated risk:

- 0-25 points: Transaction approved automatically with standard monitoring

- 26-50 points: Enhanced monitoring activated, flagged for periodic review

- 51-74 points: Step-up authentication required before approval

- 75+ points: Transaction blocked pending manual investigation

Weighted combination scoring delivers superior results compared to single-signal decisions. A high-value transaction from a new device might score 45 points individually, but if the user passes behavioral biometrics and has strong identity verification, the combined score drops to 30. Conversely, multiple weak signals accumulate into strong fraud indicators that individual checks miss.
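
The weighting and thresholds above can be sketched as a weighted sum. This is a minimal illustration, assuming each layer has already been reduced to a single 0-100 risk score. The layer scores for the two example customers are invented, and treating fractional totals between bands (for example 25.5) as belonging to the higher band is also an assumption.

```python
# Weights taken from the five-layer table; they sum to 1.0.
LAYER_WEIGHTS = {
    "identity": 0.20,
    "access": 0.15,
    "behavior": 0.25,
    "transaction": 0.30,
    "network": 0.10,
}


def risk_score(layer_scores):
    """Weighted sum of per-layer risk scores, each on a 0-100 scale."""
    return sum(weight * layer_scores[layer]
               for layer, weight in LAYER_WEIGHTS.items())


def action_for(score):
    """Map an aggregate score to the action bands listed in the article."""
    if score <= 25:
        return "approve"
    if score <= 50:
        return "enhanced_monitoring"
    if score < 75:
        return "step_up_authentication"
    return "block_for_review"


# Hypothetical traveler: unfamiliar IP and device, but normal behavior.
traveler = {"identity": 5, "access": 80, "behavior": 10,
            "transaction": 40, "network": 0}
# Hypothetical account takeover: elevated risk in every layer.
takeover = {"identity": 60, "access": 90, "behavior": 85,
            "transaction": 90, "network": 70}

traveler_action = action_for(risk_score(traveler))   # score 27.5
takeover_action = action_for(risk_score(takeover))   # score 80.75
```

The traveler's access layer alone scores 80, which would block the payment if it were judged in isolation; the weighted total of 27.5 only triggers enhanced monitoring.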

This methodology reduces false positives dramatically because legitimate edge cases typically fail only one or two checks rather than the full evaluation. A customer traveling abroad triggers location alerts but passes the behavior and identity layers. Fraudsters, by contrast, struggle to fake signals across all dimensions simultaneously.

Pro Tip: Regularly audit signal weights based on compliance requirements and regulatory standards to maintain both detection effectiveness and legal defensibility.

Navigating challenges and advancing hybrid approaches

AI fraud detection faces substantial obstacles that pure technology cannot solve alone. Extreme class imbalance affects model training because fraud represents less than 1% of transactions at most institutions. Machine learning algorithms trained on such skewed data often optimize by predicting everything as legitimate, achieving 99% accuracy while catching zero fraud.
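
The arithmetic behind that accuracy trap is easy to check. The sketch below uses a synthetic sample of 1,000 transactions with 10 fraud cases (1%, chosen for round numbers) and a "model" that labels everything legitimate. The function name and the data are illustrative.

```python
def accuracy_and_recall(y_true, y_pred):
    """Compute accuracy and fraud recall for 0/1 labels (1 = fraud)."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    fraud_total = sum(y_true)
    fraud_caught = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    accuracy = correct / len(y_true)
    recall = fraud_caught / fraud_total if fraud_total else 0.0
    return accuracy, recall


y_true = [1] * 10 + [0] * 990   # 10 fraud cases among 1,000 transactions
always_legit = [0] * 1000       # degenerate model: never predicts fraud

accuracy, recall = accuracy_and_recall(y_true, always_legit)
# accuracy is 0.99, yet recall is 0.0: not one fraud case is caught
```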

Adversarial evolution compounds this challenge. Fraudsters actively probe detection systems, learning which behaviors trigger alerts and adjusting tactics accordingly. They test small transactions before attempting large thefts, rotate through compromised accounts, and mimic legitimate user patterns. This cat-and-mouse dynamic means yesterday’s perfect model becomes today’s liability.

Data quality issues undermine even sophisticated algorithms. Customer relationship management systems contain incomplete profiles, outdated contact information, and inconsistent formatting across channels. When AI models train on flawed data, they learn incorrect patterns and generate unreliable scores. Garbage in, garbage out applies ruthlessly to fraud detection.

The explainability-imbalance paradox creates tension between model performance and transparency. Complex ensemble models achieve the highest accuracy but operate as black boxes, making decisions that analysts cannot explain to customers or regulators. Simpler, interpretable models sacrifice detection power for clarity. This tradeoff forces difficult choices between catching fraud and maintaining trust.

Purely rules-based systems offer transparency but suffer fatal weaknesses:

- Static thresholds miss evolving fraud tactics

- High false positive rates overwhelm investigation teams

- Manual rule updates lag behind threat emergence

- Limited ability to detect subtle pattern combinations

Purely AI-driven systems provide adaptability but introduce risks:

- Black-box decisions undermine regulatory compliance

- Bias in training data perpetuates unfair outcomes

- Adversarial attacks manipulate model predictions

- Lack of human judgment for nuanced situations

Hybrid AI and rules-based approaches reduce false positives by 70% and fraud losses by 60% compared to single-method systems. This combination leverages AI’s pattern recognition while maintaining rules-based guardrails for compliance and interpretability. Machine learning handles complex signal synthesis while explicit rules enforce regulatory requirements and business policies.

Hybrid architectures route decisions strategically. Clear-cut cases matching established rules process automatically. Ambiguous scenarios receive AI scoring with human review for final decisions. This division optimizes both speed and accuracy, applying expensive human expertise where it matters most.
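
One way to picture this routing is a function that tries explicit rules first and hands everything else to a model score plus a review queue. The sketch below is a minimal illustration. The `Txn` fields, the two rules, the placeholder country code, the amount limit, and the 0.8 priority cutoff are all hypothetical, and the model score is assumed to come from a separate component.

```python
from dataclasses import dataclass


@dataclass
class Txn:
    amount: float
    country: str
    beneficiary_known: bool


BLOCKED_COUNTRIES = {"ZZ"}   # placeholder code, not a real policy list
SMALL_AMOUNT = 50.0          # hypothetical auto-approve limit


def route(txn, model_score):
    """Return (decision, reason) for a transaction.

    model_score is assumed to be a fraud probability in [0, 1] produced
    elsewhere; it is only consulted when no explicit rule applies.
    """
    # Clear-cut cases: explicit rules decide and the reason is explainable.
    if txn.country in BLOCKED_COUNTRIES:
        return ("block", "rule:blocked_country")
    if txn.beneficiary_known and txn.amount <= SMALL_AMOUNT:
        return ("approve", "rule:small_known_beneficiary")
    # Ambiguous cases: the score sets review priority, a human decides.
    priority = "high" if model_score >= 0.8 else "normal"
    return ("manual_review", f"model:{priority}_priority")


decision = route(Txn(amount=5000.0, country="US", beneficiary_known=False), 0.85)
```

Returning a reason string alongside each decision keeps an audit trail showing whether a rule or the model drove the outcome, which supports the compliance goal described above.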

Pro Tip: Implement explainable AI tools like SHAP or LIME alongside your detection models, then pair machine-generated insights with compliance-focused human oversight to balance performance with accountability.

Practical guidance for implementing AI-driven fraud detection

Successful deployment requires systematic execution across technical and organizational dimensions. Follow this implementation sequence:

- Consolidate data sources into unified feature stores providing real-time access to customer profiles, transaction history, device intelligence, and external threat feeds

- Select model architectures matching your fraud landscape, starting with gradient boosting for tabular data and graph networks for relationship analysis

- Integrate explainability frameworks from project inception, not as afterthoughts, ensuring every prediction includes interpretable reasoning

- Establish human-in-the-loop workflows where analysts review model decisions, provide feedback, and handle edge cases requiring judgment

- Define evaluation metrics emphasizing Precision-Recall Area Under the Curve (PR-AUC) over simple accuracy for imbalanced datasets

- Build continuous retraining pipelines that update models as fraud patterns evolve and new attack vectors emerge

Real-time feature stores prove critical for effective detection. Fraud happens in milliseconds, requiring instant access to current risk signals. Batch-updated data warehouses introduce dangerous delays where fraudsters exploit stale information. Modern feature platforms serve pre-computed aggregations and streaming updates simultaneously, enabling sub-second scoring without sacrificing signal richness.
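
A streaming feature of the kind such a store would serve can be sketched as a sliding-window counter. This is a minimal in-memory illustration, not a feature platform. The class name and the 60-second window are assumptions, and timestamps are assumed to arrive in non-decreasing order per account.

```python
from collections import defaultdict, deque


class VelocityCounter:
    """Count each account's transactions inside a sliding time window."""

    def __init__(self, window_seconds=60):
        self.window = window_seconds
        self.events = defaultdict(deque)  # account_id -> recent timestamps

    def record(self, account_id, timestamp):
        """Store an event and return the count in the window ending now."""
        recent = self.events[account_id]
        recent.append(timestamp)
        # Evict timestamps that have aged out of the window.
        while recent and recent[0] <= timestamp - self.window:
            recent.popleft()
        return len(recent)


counter = VelocityCounter(window_seconds=60)
# Timestamps in seconds for one hypothetical account.
counts = [counter.record("acct-1", t) for t in (0, 10, 20, 65, 130)]
# counts == [1, 2, 3, 3, 1]
```

Because each call only touches the head and tail of a deque, the feature is current at scoring time, in contrast to a batch job that recomputes counts on a schedule.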

Human-in-the-loop approaches transform AI from replacement to augmentation. Analysts review high-score transactions flagged by models, applying contextual knowledge that machines lack. A large wire transfer to a new beneficiary might score high algorithmically but represent a legitimate business expansion the customer mentioned last week. Human reviewers supply this context and also label edge cases that feed back into model retraining.

Practitioners should prioritize PR-AUC (the area under the precision-recall curve) over accuracy when evaluating fraud detection performance. Precision-recall curves account for class imbalance by focusing on the minority fraud class. A model that reaches 99% accuracy by predicting everything as legitimate earns a poor PR-AUC because it cannot rank fraud above legitimate activity. This metric forces optimization toward actual detection capability.
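
To show why this metric exposes a useless model, the sketch below computes average precision, a common step-wise summary of the precision-recall curve, defined as the sum over score thresholds of (recall gain × precision). It is a small pure-Python illustration with invented labels and scores; tied scores are treated as a single threshold.

```python
def average_precision(y_true, scores):
    """Average precision for 0/1 labels (1 = fraud) and model scores."""
    total_fraud = sum(y_true)
    if total_fraud == 0:
        raise ValueError("need at least one fraud label")
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    ap, true_pos, prev_recall = 0.0, 0, 0.0
    i = 0
    while i < len(ranked):
        j = i
        # Consume every item sharing this score as one threshold step.
        while j < len(ranked) and ranked[j][0] == ranked[i][0]:
            true_pos += ranked[j][1]
            j += 1
        recall = true_pos / total_fraud
        precision = true_pos / j   # j items are flagged at this threshold
        ap += (recall - prev_recall) * precision
        prev_recall = recall
        i = j
    return ap


y_true = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]          # 2 fraud cases in 10
good_scores = [0.9, 0.2, 0.1, 0.8, 0.3, 0.1, 0.2, 0.1, 0.05, 0.15]
flat_scores = [0.0] * 10                          # "everything is legitimate"

ap_good = average_precision(y_true, good_scores)  # 1.0: fraud ranked first
ap_flat = average_precision(y_true, flat_scores)  # 0.2: just the fraud rate
```

The flat model scores no better than the fraud rate in the sample, so at a realistic fraud rate below 1% its average precision would be below 0.01 even though its accuracy exceeds 99%.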

“Effective fraud detection balances automated efficiency with human expertise. AI handles scale and pattern recognition while analysts provide judgment, context, and continuous improvement through feedback loops that make systems smarter over time.”

Investment in AI-powered financial technology infrastructure pays dividends beyond fraud prevention. The same feature engineering, model deployment, and monitoring capabilities support credit risk assessment, customer segmentation, and operational optimization. Organizations building robust AI foundations gain competitive advantages across multiple domains.

Data quality initiatives deserve equal priority with algorithm selection. Dedicate resources to cleaning customer records, standardizing transaction coding, and enriching profiles with external data sources. Improved accuracy in financial document processing directly enhances fraud detection by providing cleaner training data and more reliable real-time features.

Discover advanced AI-powered solutions with BankStatementFlow

Fraud detection depends fundamentally on data quality and processing speed. BankStatementFlow delivers AI-powered financial document conversion that transforms unstructured bank statements, invoices, and receipts into structured Excel, CSV, JSON, and XML formats with up to 99% accuracy. This automation eliminates manual data entry errors that compromise fraud detection systems while accelerating document processing from hours to seconds.

Finance professionals and fraud analysts gain immediate benefits from intelligent document processing. Clean, structured transaction data feeds directly into detection models, improving training quality and real-time scoring accuracy. The platform handles password-protected PDFs, multiple languages, and regional formats, supporting global fraud prevention operations. API access enables seamless integration with existing compliance workflows, while enterprise security features protect sensitive financial information throughout processing.

Explore how AI-powered bank statement conversion strengthens your fraud detection infrastructure by ensuring high-quality data flows into analytics systems automatically, reducing manual effort while increasing detection precision.

FAQ about the role of AI in fraud detection

What types of AI models are used in fraud detection?

Fraud detection systems employ supervised learning models like Random Forest and XGBoost trained on labeled fraud examples, unsupervised methods like clustering and isolation forests for anomaly detection, and hybrid approaches incorporating graph neural networks for relationship analysis and natural language processing for text-based signals. These models work together to catch both known fraud patterns and novel threats.

How do hybrid AI and rules-based systems reduce false positives?

Hybrid systems combine AI’s adaptive pattern recognition with rules-based compliance guardrails, routing clear-cut cases through automated rules while applying machine learning to ambiguous scenarios. This strategic division reduces false positives by 70% because AI handles complex signal combinations while rules prevent alerts on known legitimate edge cases, optimizing both accuracy and efficiency.

What challenges should I prepare for when deploying AI fraud detection?

Key challenges include extreme class imbalance where fraud represents less than 1% of transactions, adversarial fraudsters who adapt tactics to evade detection, data quality issues from incomplete or inconsistent customer records, and the explainability-imbalance paradox where accurate models lack transparency. Address these through hybrid architectures, continuous retraining, data quality initiatives, and explainable AI tools.

How important is explainability in AI-driven fraud systems?

Explainability proves essential for regulatory compliance, customer trust, and analyst effectiveness. Regulators require institutions to justify adverse decisions, customers demand transparency when transactions are blocked, and analysts need interpretable insights to investigate alerts efficiently. Tools like SHAP and LIME provide model explanations without sacrificing detection accuracy, enabling defensible decisions.

Can AI detect advanced fraud such as deepfakes or generative AI scams?

AI detects sophisticated fraud by analyzing behavioral patterns, device fingerprints, and network relationships that deepfakes and generative content cannot replicate perfectly. While fraudsters use AI to create convincing fake identities or phishing messages, detection systems counter with multimodal analysis combining biometric verification, transaction behavior, and graph analysis to expose inconsistencies across multiple evidence layers simultaneously.
